Introduction

The AI community has long evaluated multimodal foundation models (MFMs) as passive spectators: can the model report that "the red cup is to the left of the keyboard" in a static image? Recent work moves well beyond this, testing spatial reasoning over videos1 1 Thinking in Space: How Multimodal Large Language Models See, Remember, and Recall Spaces (Yang et al., 2024) 2 2 Cambrian-S: Towards Spatial Supersensing in Video (Yang et al., 2025) . These efforts have substantially advanced spatial perception. What remains less explored is spatial memory: the ability to build and maintain a grounded, continuous 3D world model across continual interaction and experience, well beyond any single observation window.

Spatial memory is distinct from temporal memory. Temporal memory tracks the order of events; spatial memory demands that an agent construct a volumetric world model and keep it coherent across motion and interaction. Cognitive science tells us that humans do this with two coordinated systems: egocentric and allocentric representation3 3 Spatial Memory: How Egocentric and Allocentric Combine (Burgess, 2006) . Egocentric representation is body-centered and action-oriented; it updates with every step and answers where am I? Allocentric representation is environment-centered and persistent, an objective map of how things relate to one another regardless of the observer's pose.

A clean example shows why both are needed: on vacation abroad, you can mentally walk through your home neighborhood from any imagined viewpoint. This cannot rely on egocentric updating alone, since you didn't measure those distances in transit. Your brain retrieves a stored allocentric map and projects it into an imagined egocentric frame. If we expect MFMs to serve as the brain of embodied AI, they need the same two representations working in concert: an allocentric map of how the world is structured, and egocentric awareness of where they stand within it.

The Allocentric Map: Spatial Representations

The allocentric system, as introduced above, provides the persistent component of spatial memory: an enduring map that outlives any single observation. For an agent operating in the physical world, what this map should contain depends on the task. For exploration and navigation, coarse geometry and topology suffice. Recent work on cognitive maps for VLMs4 4 Spatial Mental Modeling from Limited Views (Yin et al., 2025) shows that models can build structured beliefs about object positions and orientations from multi-view observations, answering questions like where is the object? and where should I explore next? This is a useful allocentric representation, but it is tuned to a navigator's needs.

Household robots face a harder problem. A mobile manipulator must not only navigate to the microwave but also pull its door open. This coupling of navigation and manipulation means that object-center coordinates alone are insufficient: the map must also encode which parts are interactive, how they move, and what state they are in. The granularity of the allocentric map, in other words, is set by the most demanding action the agent needs to perform.

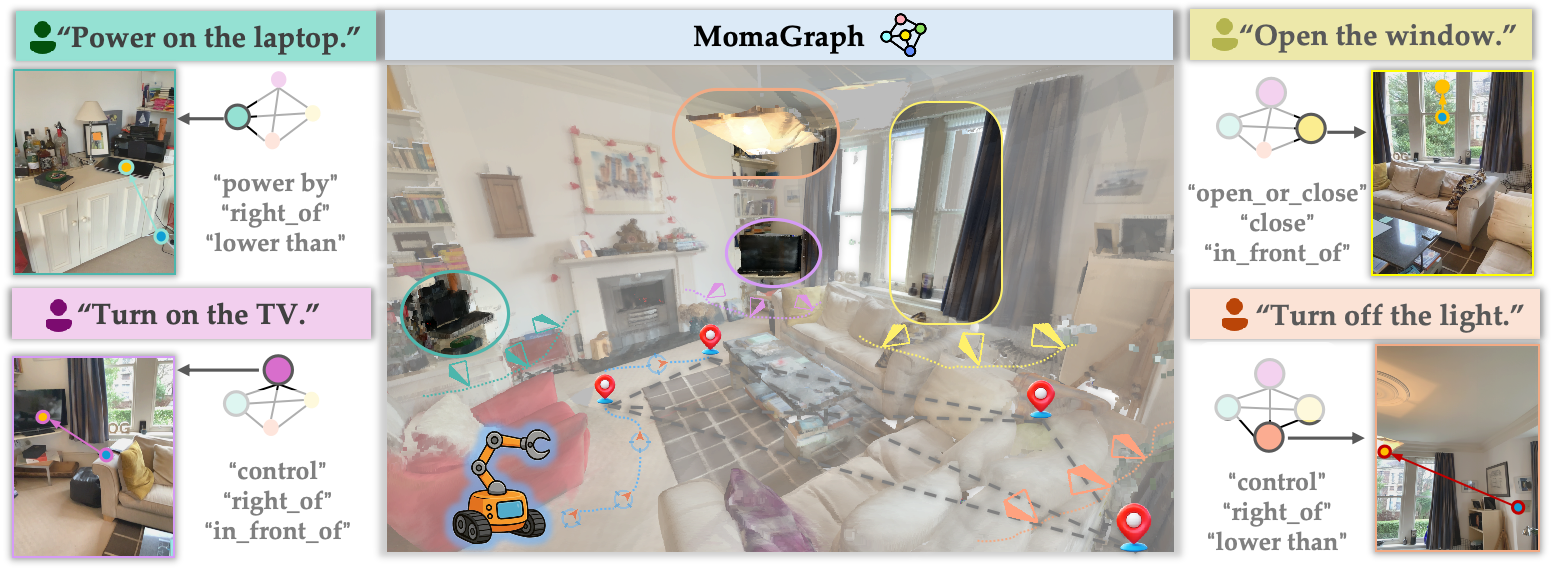

This is the motivation behind state-aware unified scene graphs5 5 MomaGraph: State-Aware Unified Scene Graphs with Vision-Language Model for Embodied Task Planning (Ju et al., 2025) . Rather than maintaining separate spatial and functional representations, a single graph jointly encodes spatial layout, functional relations, and part-level interactive elements (handles, knobs, buttons), each carrying mutable state (door open, light off). The key design choice is that the graph is not a passive description of the scene; it is constructed for a given task, and planning is done over it. World modeling and action planning share the same structure. Under a graph-then-plan framework, the VLM first builds a task-oriented scene graph from visual observations, then plans directly on that graph, so the allocentric map doubles as the action space.

These are different resolutions of the same allocentric memory: a persistent, observer-independent model of the environment that can be queried and updated as the agent acts. Navigation demands coarse topology; manipulation demands part-level affordances with mutable state. And when the map itself becomes the substrate for planning, spatial memory is no longer just about remembering where things are, it is about remembering how to act on them.

The Egocentric Self: Situated Awareness

An allocentric map, no matter how rich, is inert without a reader who knows where they stand in it. This is the role of egocentric spatial memory: continuously tracking the agent's own position, orientation, and motion relative to the environment. In cognitive science, this tracking depends on path integration, accumulating local self-motion cues into a running estimate of one's pose. When this estimate drifts, the agent's entire spatial understanding breaks down.

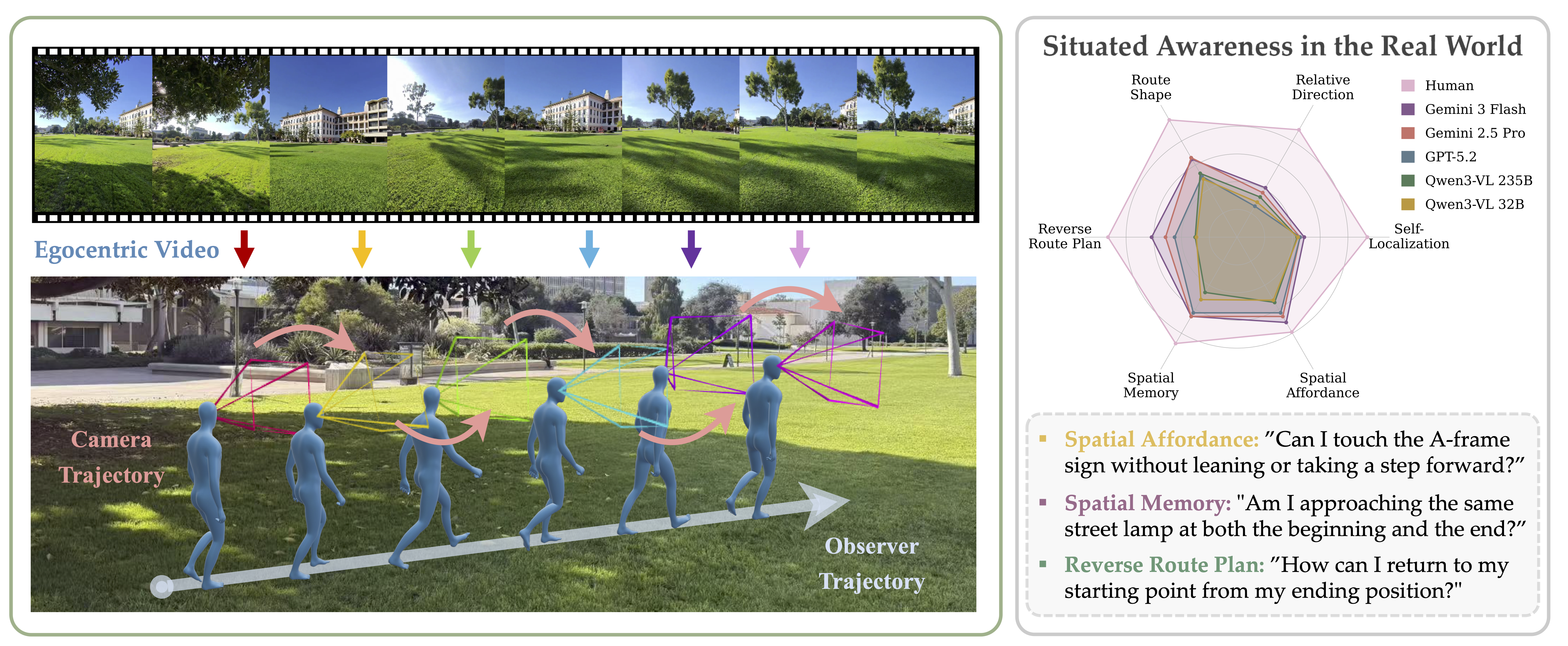

Most existing spatial benchmarks implicitly cast the model as a disembodied third-person observer, evaluating environment-centric relations (the distance between two objects) rather than observer-centric ones (which way do I need to turn?). Recent work on situated awareness6 6 Learning Situated Awareness in the Real World (Li et al., 2026) exposes this blind spot using continuous egocentric video captured with Ray-Ban Meta smart glasses across real indoor and outdoor environments. The result is a 37.66% performance gap between humans and the best MFM. More importantly, the failure modes are diagnostic.

Models consistently confuse camera rotation with physical translation. In a controlled test, an observer walks a straight line while frequently turning their head; models misclassify the trajectory as a zigzag, reading head pans as body displacement. This error compounds: as trajectories grow more complex, model accuracy degrades sharply while human performance holds steady. What is missing is egocentric spatial memory: a running self-motion estimate that separates where you are looking from where you are going.

The second failure cuts deeper. Once an object leaves the field of view, models tend to treat it as gone. This is not just a perception limitation; it exposes the absence of spatial memory at the world-model level. A system that truly remembers space would know the chair behind it still exists even when the camera faces forward. Without that, every head turn risks erasing the scene the model just observed. The allocentric map from the previous section has no one to read it.

Why Frontier Models Struggle

The previous sections examined the allocentric and egocentric halves of spatial memory separately. But spatial memory is not two systems running in parallel; it is their continuous alignment. Egocentric motion updates write into the allocentric map; the allocentric map projects back into the egocentric frame to guide action. Current MFMs fail at both sides, and have no mechanism to connect them.

The allocentric side breaks early. Contrastive pretraining induces a bag-of-words shortcut7 7 When and Why Vision-Language Models Behave like Bags-of-Words, and What to Do about It? (Yuksekgonul et al., 2023) where vision encoders treat patches as an unordered set, capturing what is present but discarding where it is. Attention makes it worse: during spatial reasoning, models attend to learned semantic priors rather than actual geometric layout8 8 Why Is Spatial Reasoning Hard for VLMs? An Attention Mechanism Perspective on Focus Areas (Chen et al., 2025) , guessing relations from statistical habit rather than grounding them in the image.

The egocentric side, as we saw, cannot maintain a coherent self-motion estimate across frames. But these two sets of deficits do not simply add up; they multiply. Spatial memory requires each side to anchor the other: egocentric updates keep the allocentric map current, and the allocentric map tells the egocentric system where it is. When the allocentric input is spatially degraded and the egocentric tracker is drifting, there is nothing stable for either to hold onto. Each frame compounds the error from the last.

This is not simply a data problem. Models trained on passive video, whether through different training objectives, can learn rich spatial structure. But spatial awareness is not the same as spatial memory. Predicting what comes next given a window of frames is different from maintaining a persistent sense of where I have been, what persists behind me, and where I am now. What may be missing is a latent representation that accumulates spatial state across time, rather than re-inferring it from each new observation. Whether this is an architecture problem, an objective problem, or both remains open.

Closing the Loop

Embodied AI is converging on foundation models as the cognitive backbone. Whether the approach is end-to-end vision-language-action models9 9 Magma: A Foundation Model for Multimodal AI Agents (Yang et al., 2025) 10 10 EgoVLA: Learning Vision-Language-Action Models from Egocentric Human Videos (Yang et al., 2025) , vision-language navigation11 11 NaVILA: Legged Robot Vision-Language-Action Model for Navigation (Cheng et al., 2025) , or dual systems that pair a VLM planner with a low-level policy, the upstream spatial intelligence of the MFM sets the ceiling for what the downstream agent can do in the physical world.

These paradigms differ in architecture and training, but they converge on the same requirement: spatial modeling that persists through action. The moment an agent acts, the scene changes, the viewpoint shifts, and the model must update its representation accordingly. This is not a matter of better perception; it is a matter of memory. Until MFMs can maintain a persistent, grounded representation of the physical world while staying located within it, the transfer from visual understanding to physical action will remain brittle.

Throughout this blog, we have asked why frontier models struggle with physical space, and our answer centers on a specific gap: spatial memory, the continual alignment of allocentric and egocentric representations. The broader question is how to build models that maintain a coherent world representation across extended physical interaction, rather than reconstructing space from scratch at every frame. That question is not answered here, but we hope this framing points toward it.